The Silent SIEM: Why Data ≠ Detection

A Post-Mortem on Stateless Logic, Schema Drift & Correlation Failure

SOC failures are rarely caused by missing tools or broken ingestion. More often, they happen when logic, schema, and execution reality drift apart. In this project, I investigated why enabled and "healthy" Elastic Security detections failed to trigger during a simulated Linux intrusion chain, despite telemetry being present. The outcome was a clear reminder of a core detection engineering truth: Data existing ≠ detection working.

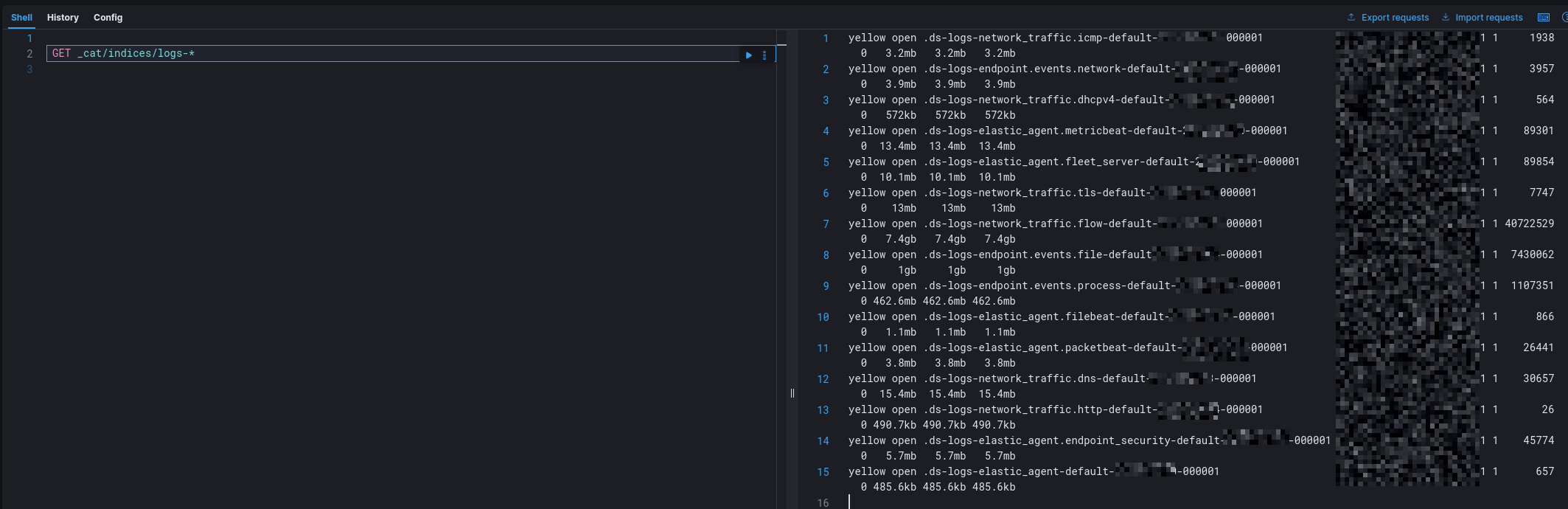

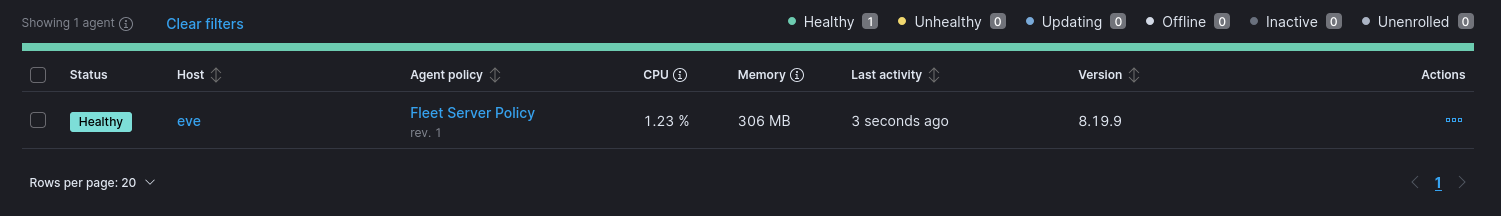

1: Telemetry Validation (Is the pipeline blind?)

Before testing detection logic, I validated ingestion end-to-end. Elastic Agent status: Healthy. Endpoint telemetry ingestion: Successful. Observed events included process execution (e.g., whoami) and outbound network activity (e.g., curl). Data was present in the logs-endpoint.events.* data stream.

Conclusion: the SIEM was not blind. The data existed.

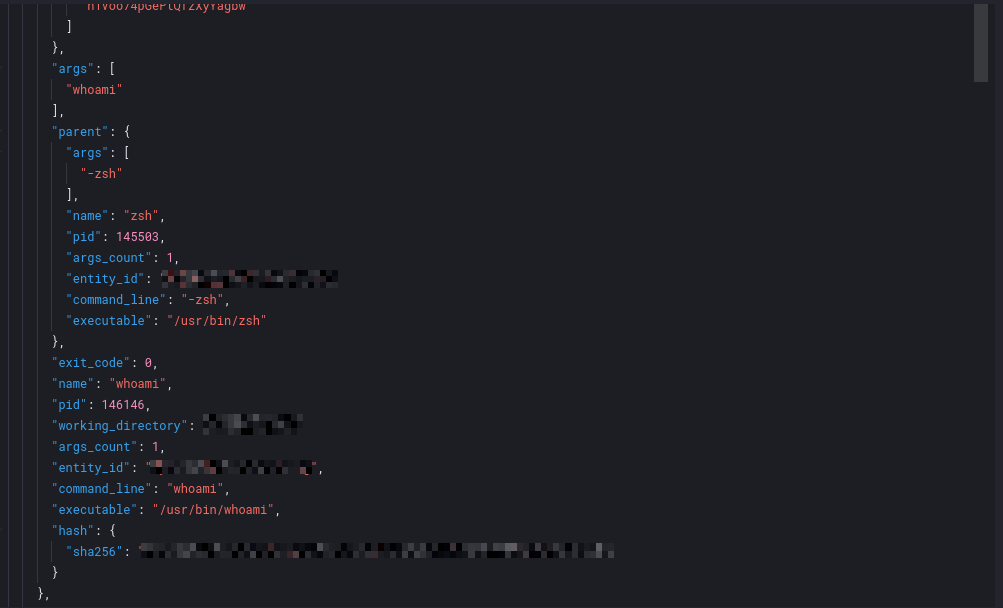

2: Attack Simulation (Does behavior produce signals?)

I executed a basic post-exploitation workflow to simulate a small intrusion chain:

- Identity: SSH authentication

- Discovery: execution of whoami

- Network activity: outbound curl request

Each behavior occurred at the OS level and produced telemetry. Result: zero alerts.

3: Root Cause Analysis (Why the detections stayed silent)

1) Schema Mismatch: The SSH brute-force rule queried system.auth. A Dev Tools audit showed that this dataset had never been initialized: logs existed, but not in the schema the rule assumed. Lesson: a detection rule is only as effective as the data model it relies on.

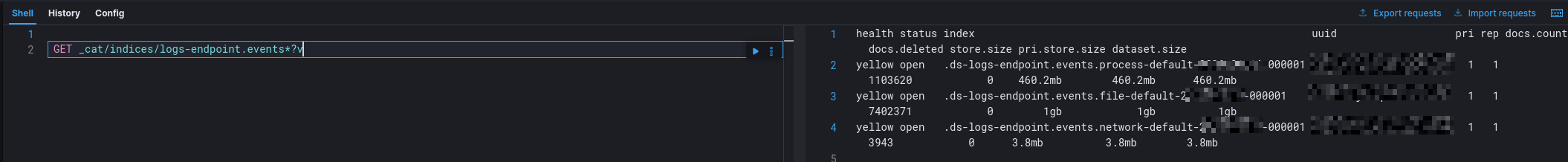
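The same kind of audit can be approximated offline before a rule is trusted. The following is a minimal sketch, assuming events have been exported as dictionaries carrying an ECS-style `event.dataset` key; the `missing_datasets` helper and the sample events are hypothetical and not part of Elastic's tooling.

```python
from collections import Counter

def missing_datasets(events, required):
    """Return the required datasets that have zero events in the sample."""
    seen = Counter(e.get("event.dataset") for e in events)
    return [d for d in required if seen[d] == 0]

# Hypothetical sample: endpoint telemetry is present, system.auth is not.
events = [
    {"event.dataset": "endpoint.events.process", "process.name": "whoami"},
    {"event.dataset": "endpoint.events.network", "process.name": "curl"},
]

# The brute-force rule assumes system.auth, so the check flags it.
print(missing_datasets(events, ["system.auth", "endpoint.events.process"]))
# ['system.auth']
```

A rule whose required dataset appears in this list cannot fire, no matter how healthy the agent looks.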

2) Field Semantic Brittleness: One process-based detection expected a specific parent executable. However, actual telemetry reflected different execution semantics (the shell interpreter instead). The detection failed not because the behavior was absent, but because the rule's assumptions didn't match real OS execution behavior.
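To make the brittleness concrete, here is a small sketch comparing a strict parent check with a more tolerant one. The parent names (`sshd`, `bash`, and the other shells) are illustrative assumptions; the actual executable the rule expected is not reproduced here, and both rule functions are hypothetical.

```python
def strict_rule(event):
    """Brittle: fires only when the direct parent is exactly the expected executable."""
    return (event["process.name"] == "whoami"
            and event["process.parent.name"] == "sshd")

def tolerant_rule(event, shells=("bash", "sh", "zsh", "dash")):
    """Looser: also accepts common shell interpreters as the direct parent."""
    parents = ("sshd",) + tuple(shells)
    return (event["process.name"] == "whoami"
            and event["process.parent.name"] in parents)

# Hypothetical event: the command runs through the login shell, not the daemon.
observed = {"process.name": "whoami", "process.parent.name": "bash"}

print(strict_rule(observed))    # False
print(tolerant_rule(observed))  # True
```

Widening the parent list trades precision for coverage, so a change like this would likely need tuning against benign shell activity.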

3) Stateless Evaluation: Identity, process, and network telemetry existed, but remained siloed. Without state, time correlation, or entity linking, the SIEM evaluated each signal as low-fidelity noise. The system saw events, but it did not understand behavior.

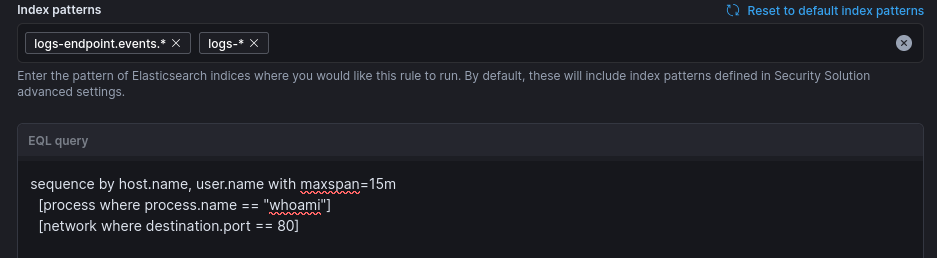
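The effect of stateless evaluation can be illustrated with a toy scoring model. This is a sketch under stated assumptions: the risk scores, the threshold, and the flattened field names are all hypothetical and do not reflect how Elastic Security scores events.

```python
ALERT_THRESHOLD = 50  # hypothetical cutoff

# Hypothetical per-event risk scores; each is low on its own.
RISK = {"authentication": 10, "process": 20, "network": 20}

def stateless_alerts(events):
    """Evaluate each event alone, with no memory of earlier events."""
    return [e for e in events if RISK.get(e["category"], 0) >= ALERT_THRESHOLD]

def entity_scores(events):
    """Accumulate risk per (host, user) so related events add up."""
    totals = {}
    for e in events:
        key = (e["host"], e["user"])
        totals[key] = totals.get(key, 0) + RISK.get(e["category"], 0)
    return totals

events = [
    {"host": "web01", "user": "alice", "category": "authentication"},
    {"host": "web01", "user": "alice", "category": "process"},
    {"host": "web01", "user": "alice", "category": "network"},
]

print(stateless_alerts(events))  # []
print(entity_scores(events))     # {('web01', 'alice'): 50}
```

Evaluated one at a time, no event crosses the threshold; linked to the same host and user, the same three events do.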

4: Engineering the Fix (Stateful EQL Correlation)

To close the gap, I attempted to replace point-in-time detections with a stateful EQL sequence. Instead of alerting on individual events, the goal was to correlate a temporal chain:

    sequence by host.name, user.name with maxspan=15m
      [authentication where event.outcome == "success"]
      [process where process.name == "whoami"]
      [network where destination.port == 80]

The logic is straightforward: when the chain is linked, isolated noise becomes a coherent intrusion lifecycle.
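For readers less familiar with EQL, the idea behind the sequence can be sketched in plain Python. This is a deliberately simplified model, not EQL's actual matching engine: it tracks only one partial sequence per (host, user) pair, and the flattened field names (`category`, `outcome`, `process_name`, `dest_port`) and the `ev` helper are hypothetical.

```python
from datetime import datetime, timedelta

# Simplified stand-ins for the three stages of the sequence.
STAGES = [
    lambda e: e["category"] == "authentication" and e.get("outcome") == "success",
    lambda e: e["category"] == "process" and e.get("process_name") == "whoami",
    lambda e: e["category"] == "network" and e.get("dest_port") == 80,
]

def find_sequences(events, maxspan=timedelta(minutes=15)):
    """Return (host, user) keys that match all stages in order within maxspan."""
    partial = {}  # key -> (index of next stage to match, time of first stage)
    hits = []
    for e in sorted(events, key=lambda e: e["ts"]):
        key = (e["host"], e["user"])
        stage, start = partial.get(key, (0, None))
        if stage > 0 and e["ts"] - start > maxspan:
            stage, start = 0, None  # window expired, start over
        if STAGES[stage](e):
            start = e["ts"] if stage == 0 else start
            stage += 1
            if stage == len(STAGES):
                hits.append(key)
                stage, start = 0, None
        partial[key] = (stage, start)
    return hits

t0 = datetime(2024, 1, 1, 12, 0)  # arbitrary illustrative timestamp

def ev(minutes, **fields):
    """Build a hypothetical event on one host and user, offset from t0."""
    return {"ts": t0 + timedelta(minutes=minutes),
            "host": "web01", "user": "alice", **fields}

chain = [
    ev(0, category="authentication", outcome="success"),
    ev(2, category="process", process_name="whoami"),
    ev(5, category="network", dest_port=80),
]
print(find_sequences(chain))  # [('web01', 'alice')]
```

The key property is that state is kept per entity and per time window, so the match depends on order and proximity rather than on any single event.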

5: The Deeper Finding (Correlation still fails if the dataset is wrong)

When I tested the sequence, the correlation did not produce alerts, not because the concept was flawed, but because the first stage of the chain could not match. The authentication events required by the sequence were not present in the expected dataset (system.auth had never been initialized). As a result, the sequence could not complete, even though later stages (process and network telemetry) were visible.

This reinforced a critical point: Stateful correlation improves detection, but it still depends on correct, validated telemetry. If a sequence relies on fields or datasets that don't exist in the environment, correlation logic cannot compensate.
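One way to catch this earlier is to count matches per stage before enabling a sequence rule. The sketch below assumes exported events with hypothetical flattened field names; `stage_coverage` and `starved_stages` are illustrative helpers, not Elastic features.

```python
# Hypothetical predicates, one per stage of the sequence.
STAGES = {
    "authentication": lambda e: e["category"] == "authentication"
                                and e.get("outcome") == "success",
    "process": lambda e: e["category"] == "process"
                         and e.get("process_name") == "whoami",
    "network": lambda e: e["category"] == "network"
                         and e.get("dest_port") == 80,
}

def stage_coverage(events, stages=STAGES):
    """Count how many events each named stage predicate matches."""
    return {name: sum(1 for e in events if pred(e))
            for name, pred in stages.items()}

def starved_stages(events, stages=STAGES):
    """List stages with zero matching events; any one blocks the whole sequence."""
    return [name for name, n in stage_coverage(events, stages).items() if n == 0]

# Hypothetical sample mirroring this lab: no authentication events at all.
observed = [
    {"category": "process", "process_name": "whoami"},
    {"category": "network", "dest_port": 80},
]

print(stage_coverage(observed))  # {'authentication': 0, 'process': 1, 'network': 1}
print(starved_stages(observed))  # ['authentication']
```

A sequence with any starved stage can never complete, so a non-empty result points to a telemetry problem rather than a logic problem.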

Conclusion

Detection coverage does not equal detection efficacy. Attacks unfold as stories, but many SIEM detections still evaluate sentences in isolation, and sometimes they evaluate the wrong book entirely. Until detections retain state across identity, endpoint, and network telemetry, and teams validate that the underlying data model matches real execution, silence will continue to look like safety.

See the quick summary on Instagram @maria.cybersec.